What is Event-Driven Architecture?

Event-Driven Architecture means reacting to a clearly defined change of state rather than reacting directly to Data Manipulation Language (DML) statements. It aims to separate the steps that are usually bundled together: reviewing the data, choosing what action should be taken, and then taking that action. Triggers, flows, or process builders are still required to decide that an event has occurred, but the work that follows can now be handled separately in an event-defined context.

How Is This Achieved in Salesforce?

Salesforce offers Platform Events. A platform event is a custom event message that is defined much like a custom object: you give it custom fields of various types. Unlike a record, a published platform event message is only retained temporarily (24 hours for standard-volume events; high-volume events are retained for 72 hours). There are different behavior settings for how defined events get published, but the core benefit is that a trigger subscribed to a platform event runs asynchronously in its own execution context, not in the execution context that published the event. Platform Events are not limited to Apex; subscribers can also be built with Flows and process builders. All of these subscribers run independently of each other.
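The decoupling described above can be illustrated with a small in-memory model. This is a minimal Python sketch, not Salesforce code: `EventBus`, `subscribe`, `publish`, and `deliver` are hypothetical names chosen for illustration, and on the real platform the queuing and delivery are handled for you. It only models two properties: publishing does not run subscriber logic in the publisher's context, and each subscriber runs independently of the others.

```python
from typing import Callable, Dict, List, Tuple

Handler = Callable[[dict], None]


class EventBus:
    """Hypothetical in-memory stand-in for an event bus (not a Salesforce API)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = {}
        self._queue: List[Tuple[str, dict]] = []

    def subscribe(self, event_name: str, handler: Handler) -> None:
        self._subscribers.setdefault(event_name, []).append(handler)

    def publish(self, event_name: str, payload: dict) -> None:
        # Publishing only enqueues the message; no subscriber runs here,
        # so the publisher's own work is not slowed down by subscribers.
        self._queue.append((event_name, dict(payload)))

    def deliver(self) -> List[Tuple[str, Exception]]:
        # Deliver everything queued so far. Each subscriber receives its own
        # copy of the payload, and a failure in one does not stop the others.
        errors: List[Tuple[str, Exception]] = []
        pending, self._queue = self._queue, []
        for event_name, payload in pending:
            for handler in self._subscribers.get(event_name, []):
                try:
                    handler(dict(payload))
                except Exception as exc:
                    errors.append((event_name, exc))
        return errors
```

In this model, calling `publish` returns immediately, and the subscribers only do their work when `deliver` runs later. A subscriber that raises an error is recorded in the returned list while the remaining subscribers still receive the message.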

Why Change to this model?

This model may not sound very different from what triggers already do on DML operations. However, this methodology offers a few advantages:

1) Horizontal Scaling – Processes are tied to the events that occur rather than to the objects involved. If the definition of an event changes, the state comparison only has to be changed once, where the event is fired, and the execution side remains functional because it is independent of that definition. A further scaling benefit is staying within Apex governor limits. This is challenging in an object-centric DML model because each process competes for the same limits as every other process on that object, and in larger organizations this can push a transaction close to the 10-second CPU time limit for synchronous Apex. Processing these changes asynchronously as events significantly reduces the risk of CPU timeout errors.

2) Asynchronous Threads – Instead of adding more functionality centralized around an object, you can add a new subscriber to the event, and it will run asynchronously in its own thread. This is very different from adding a new trigger to an object, because multiple triggers on the same object run synchronously in an order that is not guaranteed. For that reason, having multiple triggers on a single object is generally considered contrary to best practice. Having multiple triggers on an event, each with its own siloed context, is exactly the design pattern desired in an Event-Driven Architecture: multiple disparate systems or processes all listen to the same event and operate independently of each other. If there is a dependency between processes, a defined order of execution can be created by firing a second event from the first subscriber and having the dependent process subscribe to that.

3) External Systems – Systems outside of Salesforce can also listen to these events and run their own business logic by subscribing to your custom events through the Streaming API. For example, if an ERP needs to generate an invoice when a deal closes in Salesforce, the platform event can carry the customer and deal information, and the ERP can listen for it via Salesforce’s Streaming API. When the ERP receives the message, it generates the invoice for the customer specified in the event.
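To make the ERP example concrete, the sketch below shows what the listening side might do once a message arrives. It is written in Python and deliberately leaves out the Streaming API connection itself. The event name `Deal_Closed__e` and the fields `Customer_Name__c` and `Deal_Amount__c` are hypothetical, and the JSON envelope (`channel`, `data.payload`, `data.event.replayId`) is assumed to follow the shape Salesforce documents for platform event messages delivered over CometD, so check it against the current documentation before relying on it.

```python
import json
from dataclasses import dataclass

# Hypothetical channel for a hypothetical "Deal Closed" platform event.
EXPECTED_CHANNEL = "/event/Deal_Closed__e"


@dataclass
class Invoice:
    customer: str
    amount: float
    replay_id: int  # kept so the ERP can tell which event produced the invoice


def invoice_from_message(raw: str) -> Invoice:
    """Build an invoice from one received event message (assumed envelope)."""
    message = json.loads(raw)
    if message.get("channel") != EXPECTED_CHANNEL:
        raise ValueError("unexpected channel: %r" % message.get("channel"))
    data = message["data"]
    payload = data["payload"]
    return Invoice(
        customer=payload["Customer_Name__c"],   # hypothetical custom field
        amount=float(payload["Deal_Amount__c"]),  # hypothetical custom field
        replay_id=int(data["event"]["replayId"]),
    )
```

Keeping the message parsing separate from the connection code means the invoice logic can be exercised with recorded sample messages, without a live subscription.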

Use Case

In order to show how to switch over, let’s take an example business process and break it out into events and subscribers.

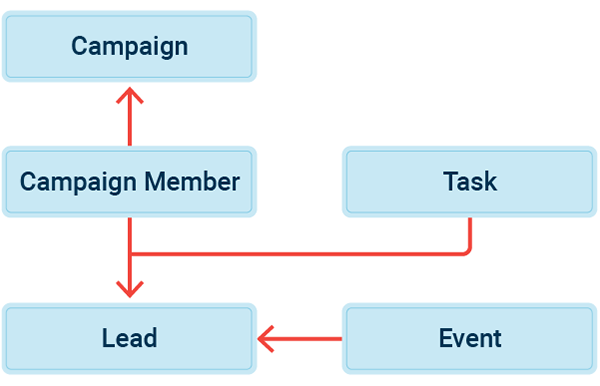

In this example, consider a Lead that is created and assigned via standard lead assignment rules, while additional external actions are expected to change the ownership of the lead in the following priority order (where 3 is the highest):

1) Assign the Lead to the BDR queue when a Task of type Call is marked as Completed

2) If an Event is added to a Lead and marked as completed, assign the Lead to the Account Executive for that region

3) If a campaign with a value of 100K gets associated with a Lead, assign that Lead to a High Priority BDR queue

Modeling this business process with triggers would require a trigger on each of the Campaign Member, Task, and Event objects, all doing similar checks and running DML operations on the Lead object. This might not seem cumbersome, but imagine it as one of many processes in your organization: you have now added processing delay to several high-volume objects such as Event, Task, and Campaign Member.

An improvement on this would be to fire a future method to handle the action asynchronously, but there are some caveats to this:

- You cannot call another future method from within a future method. (Note: you can chain queueables to call future methods, but that can get messy unless you are using a proper asynchronous framework.)

- You are also now limited to handling this business logic with code, as you can’t execute future logic with a flow/process builder.

- You are limited to 50 future calls within a single synchronous execution context, so this approach will not scale well as your org grows.

Considering the requirements from the perspective of events, the process can become much easier. An event named “Lead Reassign” can be defined and fired on the following conditions:

- On Tasks tied to Leads where the type is Call and the status is marked complete

- On Events tied to Leads that are marked as complete

- On Campaign Members tied to a Lead where the campaign value is greater than 100K
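The firing conditions above can be sketched as a single decision function. This is a Python illustration rather than Apex or Flow logic. The record keys (`kind`, `lead_id`, `type`, `status`, `campaign_value`) and the function name are hypothetical, the priority numbers 1 to 3 are assumed to follow the order in which the reassignment rules are listed earlier (campaign rule highest), and "100K" is read as a strict threshold of 100,000.

```python
from typing import Optional

# Assumption: "100K" means 100,000 and the comparison is strictly greater than.
CAMPAIGN_VALUE_THRESHOLD = 100_000


def lead_reassign_event(record: dict) -> Optional[dict]:
    """Return a "Lead Reassign" event payload, or None if no event should fire."""
    lead_id = record.get("lead_id")
    if not lead_id:
        return None  # the record is not tied to a Lead

    kind = record.get("kind")
    if (kind == "Task" and record.get("type") == "Call"
            and record.get("status") == "Completed"):
        priority = 1
    elif kind == "Event" and record.get("status") == "Completed":
        priority = 2
    elif (kind == "CampaignMember"
            and record.get("campaign_value", 0) > CAMPAIGN_VALUE_THRESHOLD):
        priority = 3
    else:
        return None

    # The payload carries only what the subscriber needs: which Lead, and
    # how important this reassignment request is.
    return {"lead_id": lead_id, "priority": priority}
```

Each of the three object triggers (or flows) would only need to make this check and publish the resulting message; none of them has to query or update the Lead itself.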

One subscriber on the “Lead Reassign” event can then be created, either as a flow/process builder or as an Apex trigger. This logic reads the priority sent in the event message and compares it with the previous priority, which can either be saved on the lead record itself or, as in this case, derived from who currently owns the lead (e.g. if the lead is owned by a member of the High Priority BDR Queue, assume priority level 3). If the event priority is greater than the previous priority, the subscriber updates the Lead owner.
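The subscriber's comparison can be sketched in a few lines. Again this is Python for illustration, not an Apex trigger. The owner labels, the dictionary-based lead record, and the function name are hypothetical, and "Account Executive for that region" is simplified to a single label. An owner that came from standard assignment rules is assumed to count as priority 0.

```python
# Assumed mapping between owners and priority levels (3 is the highest).
OWNER_PRIORITY = {
    "BDR Queue": 1,
    "Account Executive": 2,
    "High Priority BDR Queue": 3,
}
PRIORITY_OWNER = {level: owner for owner, level in OWNER_PRIORITY.items()}


def handle_lead_reassign(lead: dict, event: dict) -> bool:
    """Reassign the lead if the event outranks its current owner.

    Returns True when the owner was changed.
    """
    # Derive the previous priority from the current owner; owners set by
    # standard assignment rules are not in the map and count as 0.
    previous = OWNER_PRIORITY.get(lead["owner"], 0)
    new_owner = PRIORITY_OWNER.get(event["priority"])
    if new_owner is None or event["priority"] <= previous:
        return False  # unknown priority, or not higher than the current one
    lead["owner"] = new_owner
    return True
```

With this rule, a lead already owned by the High Priority BDR Queue is left alone when a lower-priority event, such as a completed call, arrives later.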

What makes this so nice is that all the logic of querying the lead(s) and determining previous priority and what should be re-assigned happens in a separate context from the one that triggered the event, so from an end-user’s perspective there is no waiting period while the calculation happens.

Summary

In summary, Platform Events are an excellent way to process defined business logic asynchronously. Their high base limit of 250,000 published messages per hour, with the option to purchase additional capacity, gives a strong basis for scalability. Defining subscribers lets you build event-triggered business logic, which in turn allows for object-agnostic code. Platform Events are not limited to Apex; they can also be used from Flows and process builders, which lets administrators work with an event-driven architecture and optimize their processes.
